Q&A: Dina Katabi on a “smart” home with actual intelligence

MIT professor is designing the next generation of smart wireless devices that will sit in the background, gathering and interpreting data, rather than being worn on the body.

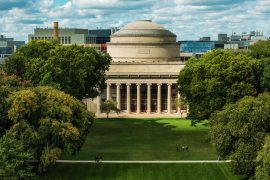

Dina Katabi is designing the next generation of smart wireless devices that will sit in the background of a given room, gathering and interpreting data, rather than being wrapped around one’s wrist or worn elsewhere on the body. In this Q&A, Katabi, the Thuan (1990) and Nicole Pham Professor at MIT, discusses some of her recent work.

Q: Smartwatches and fitness trackers have given us a new level of personalized health information. What’s next?

A: The next frontier is the home, and building truly intelligent wireless systems that understand people’s health and can interact with the environment and other devices. Google Home and Alexa are reactive. You tell them, “wake me up,” but they sound the alarm whether you’re in bed or have already left for work. My lab is working on the next generation of wireless sensors and machine-learning models that can make more personalized predictions.

We call them the invisibles. For example, instead of ringing an alarm at a specific time, the sensor can tell if you’ve woken up and started making coffee. It knows to silence the alarm. Similarly, it can monitor an elderly person living alone and alert their caregiver if there’s a change in vital signs or eating habits. Most importantly, it can act without people having to wear a device or tell the sensors what to do.

Q: How does an intelligent sensing system like this work?

A: We’re developing “touchless” sensors that can track people’s movements, activities, and vital signs by analyzing radio signals that bounce off their bodies. Our sensors also communicate with other sensors in the home, which allows them to analyze how people interact with their appliances. For example, by combining a person’s location in the home with power signals from smart meters, we can tell when appliances are used and measure their energy consumption. In all cases, the machine-learning models we’re co-developing with the sensors analyze radio waves and power signals to extract high-level information about how people interact with each other and their appliances.
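To make the idea of combining location data with smart-meter readings concrete, here is a minimal sketch in Python. It is not the lab's method, which relies on machine-learning models. It is a simple rule-based illustration that assumes a made-up floor plan, a made-up power threshold, and hypothetical names such as `APPLIANCES` and `attribute_events`. It looks for sudden rises in household power draw and attributes each one to the appliance nearest to where the person was standing at that moment.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class PowerSample:
    t: float      # time in seconds
    watts: float  # whole-home power reading from the smart meter


@dataclass
class LocationSample:
    t: float  # time in seconds
    x: float  # position in metres
    y: float


# Hypothetical appliance positions (metres) on an assumed floor plan.
APPLIANCES = {
    "kettle": (1.0, 0.5),
    "microwave": (4.0, 0.5),
    "washer": (8.0, 3.0),
}


def detect_on_events(power, min_jump_watts=200.0):
    """Return (time, jump) for each rise between consecutive samples
    that is at least min_jump_watts (an assumed threshold)."""
    events = []
    for prev, cur in zip(power, power[1:]):
        jump = cur.watts - prev.watts
        if jump >= min_jump_watts:
            events.append((cur.t, jump))
    return events


def location_at(track, t):
    """Return the location sample closest in time to t."""
    return min(track, key=lambda s: abs(s.t - t))


def attribute_events(power, track, appliances=APPLIANCES, max_distance_m=1.5):
    """Pair each power rise with the nearest appliance to the person,
    or with None if nobody was within max_distance_m of any appliance."""
    results = []
    for t, jump in detect_on_events(power):
        loc = location_at(track, t)
        name, dist = min(
            ((n, hypot(loc.x - ax, loc.y - ay)) for n, (ax, ay) in appliances.items()),
            key=lambda pair: pair[1],
        )
        results.append((t, jump, name if dist <= max_distance_m else None))
    return results
```

A real system would have to cope with appliances that switch on by themselves, several people in the home, and noisy readings, which is where learned models come in.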

Q: What’s the hardest part of building “invisible” sensing systems?

A: The breadth of technologies involved. Building “invisibles” requires innovations in sensor hardware, wireless networks, and machine learning. Invisibles also have strict performance and security requirements.

Q: What are some of the applications?

A: They will enable truly “smart” homes in which the environment senses and responds to human actions. They can interact with appliances and help homeowners save energy. They can alert a caregiver when they detect changes in someone’s health. They can alert you or your doctor when you don’t take your medication properly. Unlike wearable devices, invisibles don’t need to be worn or charged. They can understand human interactions, and unlike cameras, they can pick up enough high-level information without revealing individual faces or what people are wearing. It’s much less invasive.

Q: How will you integrate security into the physical sensors?

A: In computer science, we have a concept called challenge-response. When you log in to a website, you’re asked to identify the objects in several photos to prove that you’re human and not a bot. Here, the invisibles understand actions and movements. So, you could be asked to make a specific gesture to verify that you’re the person being monitored. You could also be asked to walk through a monitored space to verify that you have legitimate access.
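The gesture-based challenge-response idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a description of how the invisibles implement security. The gesture names, the `GestureVerifier` class, and the 30-second time limit are all hypothetical, and gesture recognition itself is stubbed out as a plain string that the sensor is assumed to report.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical set of gestures the sensor is assumed to recognize.
GESTURES = ("raise_left_arm", "raise_right_arm", "wave", "sit_then_stand")


@dataclass(frozen=True)
class Challenge:
    nonce: str         # random identifier so each challenge is unique
    gesture: str       # gesture the person is asked to perform
    expires_at: float  # clock value after which the challenge is invalid


class GestureVerifier:
    def __init__(self, ttl_seconds=30.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._pending = {}  # nonce -> Challenge awaiting a response

    def issue(self):
        """Pick an unpredictable gesture and remember the challenge."""
        challenge = Challenge(
            nonce=secrets.token_hex(16),
            gesture=secrets.choice(GESTURES),
            expires_at=self._clock() + self._ttl,
        )
        self._pending[challenge.nonce] = challenge
        return challenge

    def verify(self, nonce, observed_gesture):
        """Accept only a fresh, unused challenge answered with the right gesture."""
        challenge = self._pending.pop(nonce, None)  # single use: blocks replays
        if challenge is None:
            return False
        if self._clock() > challenge.expires_at:
            return False
        return observed_gesture == challenge.gesture
```

Because the requested gesture is chosen at random and each challenge can be answered only once within a short window, a recording of an earlier response would not pass a later check.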

Q: What can invisibles measure that wearables can’t?

A: Wearables track acceleration but they don’t understand actual movements; they can’t tell whether you walked from the kitchen to the bedroom or just moved in place. They can’t tell whether you’re sitting at the table for dinner or at your desk for work. The invisibles address all of these issues.

Current deep-learning models are also limited, whether the wireless signals are collected from wearable or background sensors. Most handle images, speech, and written text. In a project with the MIT-IBM Watson AI Lab, we’re developing new models to interpret radio waves, acceleration data, and some medical data. We’re training these models without labeled data, in an unsupervised approach, since non-experts have a difficult time labeling radio waves or acceleration and medical signals.

Q: You’ve founded several startups, including CodeOn, for faster and more secure networking, and Emerald, a health analytics platform. Any advice for aspiring engineer-entrepreneurs?

A: It’s important to understand the market and your customers. Good technologies can make great companies, but they are not enough. Timing and the ability to deliver a product are essential.