Case Studies in Social and Ethical Responsibilities of Computing

The MIT Case Studies in Social and Ethical Responsibilities of Computing (SERC) aims to advance new efforts within and beyond the Schwarzman College of Computing. The specially commissioned and peer-reviewed cases are brief and intended to be effective for undergraduate instruction across a range of classes and fields of study. They may also be of interest to computing professionals, policy specialists, and general readers.

The series editors interpret “social and ethical responsibilities of computing” broadly. Some cases focus closely on particular technologies, others on trends across technological platforms. Others examine social, historical, philosophical, legal, and cultural facets that are essential for thinking critically about present-day efforts in computing activities. Special efforts are made to solicit cases on topics ranging beyond the United States and that highlight perspectives of people who are affected by various technologies in addition to perspectives of designers and engineers.

New sets of case studies, produced with support from the MIT Press’ Open Publishing Services program, will be published twice a year and made available via the Knowledge Futures Group’s PubPub platform. The SERC case studies are made available for free on an open-access basis, under Creative Commons licensing terms. Authors retain copyright, enabling them to re-use and re-publish their work in more specialized scholarly publications.

If you have suggestions for a new case study or comments on a published case, the series editors would like to hear from you! Please reach out to serc-cases@mit.edu.

Summer 2025

How Generative AI Works and How It Fails

Censorship of Misinformation and Freedom of Speech on Social Media

Creeping Questions: Computer Ethics and the 1980s Morris Worm

Past Issues

The Puzzling Persistence of Age Progression Technology by Sharrona Pearl

“Explainable” AI Has Some Explaining to Do by Ho Chit Siu, Ashley Suh, Nora Smith, and Isabelle Hurley

Addictive Intelligence: Understanding Psychological, Legal, and Technical Dimensions of AI Companionship by Robert Mahari and Pat Pataranutaporn

From Mining to E-waste: The Environmental and Climate Justice Implications of the Electronics Hardware Life Cycle by Lelia Hampton, Madeline Schlegel, Ellie Bultena, Jasmin Liu, Anastasia Dunca, Mrinalini Singha, Sungmoon Lim, Lauren Higgins, and Christopher Rabe

Complete Delete: In Practice, Clicking ‘Delete’ Rarely Deletes. Should It? by Simson Garfinkel

Integrals and Integrity: Generative AI Tries to Learn Cosmology by Bruce A. Bassett

How Interpretable Is “Interpretable” Machine Learning? by Ho Chit Siu, Kevin Leahy, and Makai Mann

AI’s Regimes of Representation: A Community-Centered Study of Text-to-Image Models in South Asia by Rida Qadri, Renee Shelby, Cynthia L. Bennett, and Remi Denton

Pretrial Risk Assessment on the Ground: Algorithms, Judgments, Meaning, and Policy by Cristopher Moore, Elise Ferguson, and Paul Guerin

To Search and Protect? Content Moderation and Platform Governance of Explicit Image Material by Mitali Thakor, Sumaiya Sabnam, Ransho Ueno, and Ella Zaslow

Emotional Attachment to AI Companions and European Law by Claire Boine

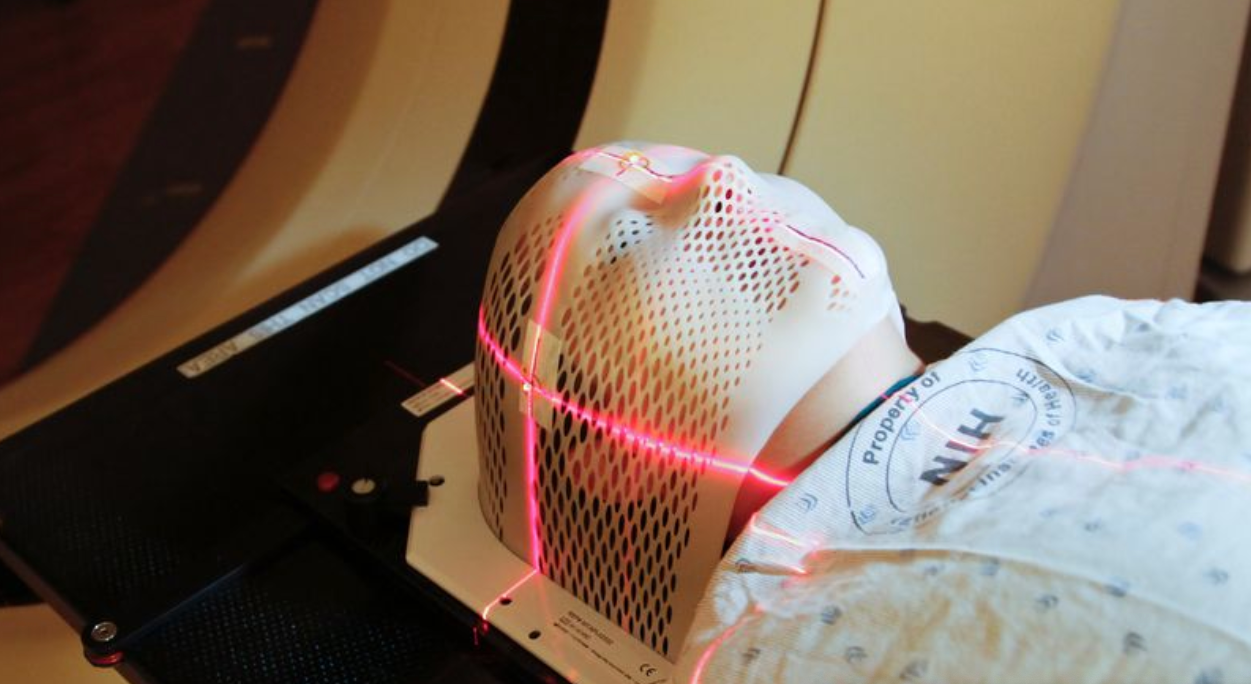

Algorithmic Fairness in Chest X-ray Diagnosis: A Case Study by Haoran Zhang, Thomas Hartvigsen, and Marzyeh Ghassemi

The Right to Be an Exception to a Data-Driven Rule by Sarah H. Cen and Manish Raghavan

Twitter Gamifies the Conversation by C. Thi Nguyen, Meica Magnani, and Susan Kennedy

“Porsche Girl”: When a Dead Body Becomes a Meme by Nadia de Vries

Privacy and Paternalism: The Ethics of Student Data Collection by Kathleen Creel and Tara Dixit

Differential Privacy and the 2020 US Census by Simson Garfinkel

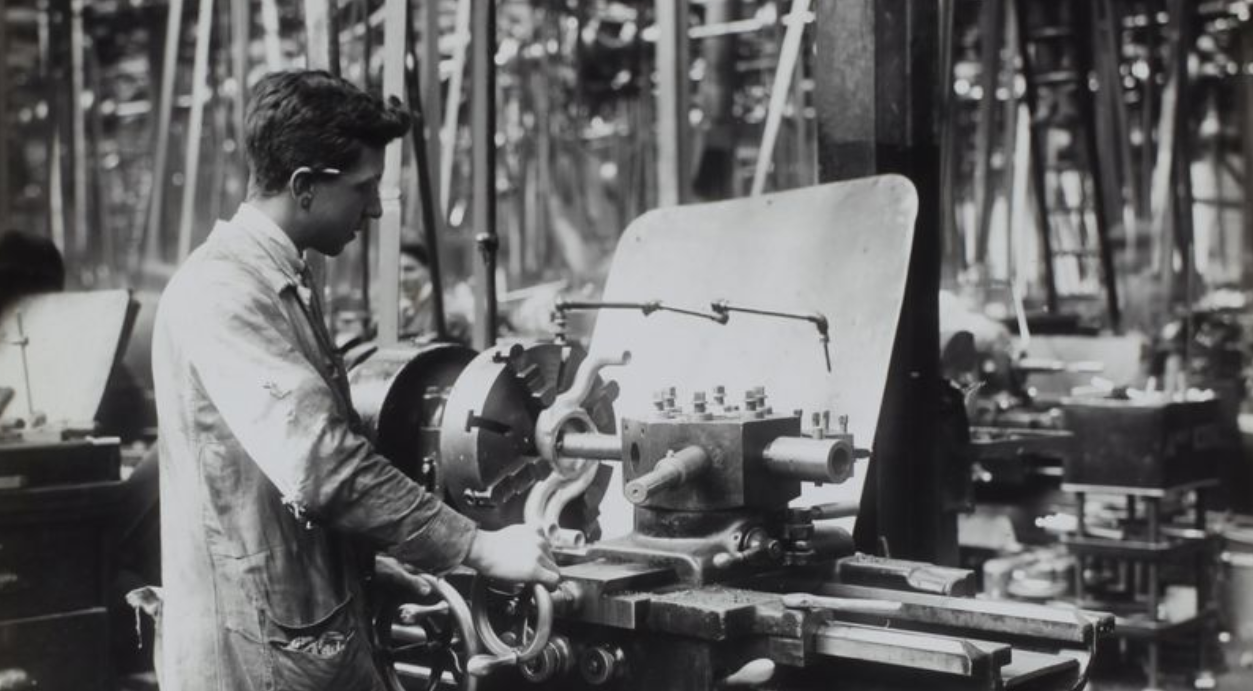

The Puzzle of the Missing Robots by Suzanne Berger and Benjamin Armstrong

The Cloud Is Material: On the Environmental Impacts of Computation and Data Storage by Steven Gonzalez Monserrate

Algorithmic Redistricting and Black Representation in US Elections by Zachary Schutzman

Hacking Technology, Hacking Communities: Codes of Conduct and Community Standards in Open Source by Christina Dunbar-Hester

Understanding Potential Sources of Harm throughout the Machine Learning Life Cycle by Harini Suresh and John Guttag

Public Debate on Facial Recognition Technologies in China by Tristan G. Brown, Alexander Statman, and Celine Sui

The Case of the Nosy Neighbors by Johanna Gunawan and Woodrow Hartzog

Who Collects the Data? A Tale of Three Maps by Catherine D’Ignazio and Lauren Klein

The Bias in the Machine: Facial Recognition Technology and Racial Disparities by Sidney Perkowitz

The Dangers of Risk Prediction in the Criminal Justice System by Julia Dressel and Hany Farid