A better way to match 3D volumes

By mapping the volumes of objects, rather than their surfaces, a new technique could yield solutions to computer graphics problems in animation and CAD.

In computer graphics and computer-aided design (CAD), 3D objects are often represented by the contours of their outer surfaces. Computers store these shapes as “thin shells,” which model the contours of the skin of an animated character but not the flesh underneath.

This modeling decision makes it efficient to store and manipulate 3D shapes, but it can lead to unexpected artifacts. An animated character’s hand, for example, might crumple when bending its fingers — a motion that resembles how an empty rubber glove deforms rather than the motion of a hand filled with bones, tendons, and muscle. These differences are particularly problematic when developing mapping algorithms, which automatically find relationships between different shapes.

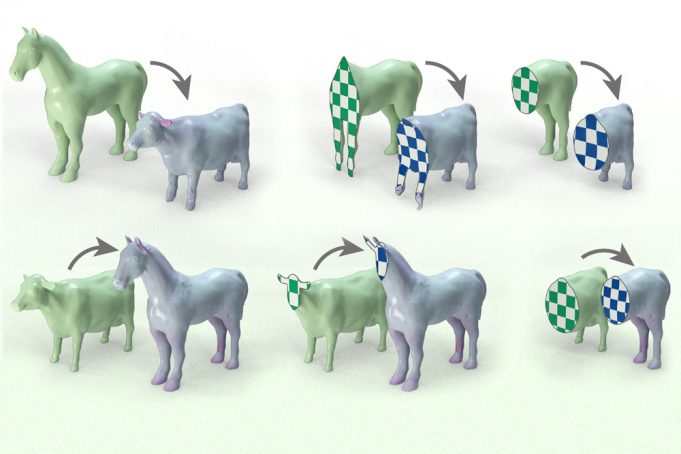

To address these shortcomings, researchers at MIT have developed an approach that aligns 3D shapes by mapping volumes to volumes, rather than surfaces to surfaces. The technique represents shapes as tetrahedral meshes that fill the interior of a 3D object, not just its skin. The algorithm then determines how to move and stretch the corners of the tetrahedra in a source shape so that it aligns with a target shape.
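Moving the four corners of a tetrahedron defines an affine map of its interior. That map can be summarized by a 3×3 matrix J, called the deformation gradient (or Jacobian). The minimal sketch below assumes NumPy and uses hypothetical helper names rather than the authors' code. It computes J for one tetrahedron and flags inversion, the kind of artifact volumetric mapping aims to avoid, which shows up as det(J) ≤ 0.

```python
import numpy as np

def edge_matrix(corners):
    """Stack the three edges leaving corner 0 as the columns of a 3x3 matrix."""
    c = np.asarray(corners, dtype=float)  # shape (4, 3): one row per corner
    return np.column_stack([c[1] - c[0], c[2] - c[0], c[3] - c[0]])

def deformation_gradient(rest, moved):
    """Jacobian J of the affine map taking the rest tetrahedron to the moved one.

    J satisfies J @ edge_matrix(rest) == edge_matrix(moved).
    """
    return edge_matrix(moved) @ np.linalg.inv(edge_matrix(rest))

def is_inverted(rest, moved):
    """A tetrahedron is flipped (or flattened) when det(J) <= 0."""
    return np.linalg.det(deformation_gradient(rest, moved)) <= 0.0

rest = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1]], dtype=float)
stretched = rest * np.array([2.0, 1.0, 1.0])   # stretch 2x along x
flipped = rest * np.array([1.0, 1.0, -1.0])    # mirror through the xy-plane

print(deformation_gradient(rest, stretched))   # the diagonal matrix diag(2, 1, 1)
print(is_inverted(rest, stretched), is_inverted(rest, flipped))  # False True
```

Because J is constant inside each tetrahedron, a map of a whole mesh reduces to one such matrix per element.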

Because it incorporates volumetric information, the researchers’ technique is better able to model fine parts of an object, avoiding the twisting and inversion typical of surface-based mapping.

“Switching from surfaces to volumes stretches the rubber glove over the whole hand. Our method brings geometric mapping closer to physical reality,” says Mazdak Abulnaga, an electrical engineering and computer science (EECS) graduate student who is lead author of the paper on this mapping technique.

The approach Abulnaga and his collaborators developed aligned shapes more effectively than baseline methods, producing high-quality shape maps with less distortion than competing alternatives. Their algorithm was especially well-suited to challenging mapping problems where the input shapes are geometrically distinct, such as mapping a smooth rabbit to a LEGO-style rabbit made of cubes.

The technique could be useful in a number of graphics applications. For instance, it could be used to transfer the motions of a previously animated 3D character onto a new 3D model or scan. The same algorithm can transfer textures, annotations, and physical properties from one 3D shape to another, with applications not just in visual computing but also in computational manufacturing and engineering.

Joining Abulnaga on the paper are Oded Stein, a former MIT postdoc who is now on the faculty at the University of Southern California; Polina Golland, the Sunlin and Priscilla Chou Professor of EECS, a principal investigator in the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), and the leader of the Medical Vision Group; and Justin Solomon, an associate professor of EECS and the leader of the CSAIL Geometric Data Processing Group. The research will be presented at the ACM SIGGRAPH conference.

Shaping an algorithm

Abulnaga began this project by extending surface-based algorithms so they could map shapes volumetrically, but each attempt failed or produced implausible maps. The team quickly realized that new mathematics and algorithms were needed to tackle volume mapping.

Most mapping algorithms work by trying to minimize an “energy,” which quantifies how much a shape deforms when it is displaced, stretched, squashed, and sheared into another shape. These energies are often borrowed from physics, which uses similar equations to model the motion of elastic materials like gelatin.
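One widely used energy of this kind is the as-rigid-as-possible (ARAP) energy. It measures how far each tetrahedron's deformation gradient J is from the nearest rotation R, weighted by the tetrahedron's rest volume, so rigid motions cost nothing while stretching, squashing, and shearing are penalized. The sketch below uses it only as an illustration and assumes NumPy. The article does not say which energy the MIT team uses, and the helper names are hypothetical.

```python
import numpy as np

def closest_rotation(J):
    """Nearest rotation to J in the Frobenius norm, via SVD (polar decomposition)."""
    U, _, Vt = np.linalg.svd(J)
    R = U @ Vt
    if np.linalg.det(R) < 0:   # avoid returning a reflection
        U[:, -1] *= -1
        R = U @ Vt
    return R

def arap_energy(J, rest_volume):
    """Rest-volume-weighted ARAP energy ||J - R||_F^2 for one tetrahedron."""
    return rest_volume * np.linalg.norm(J - closest_rotation(J), "fro") ** 2

rest_volume = 1.0 / 6.0   # volume of the unit corner tetrahedron
theta = np.pi / 4
rotation = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                     [np.sin(theta),  np.cos(theta), 0.0],
                     [0.0, 0.0, 1.0]])
stretch = np.diag([2.0, 1.0, 1.0])

print(arap_energy(rotation, rest_volume))  # close to 0: a rigid rotation costs nothing
print(arap_energy(stretch, rest_volume))   # close to 1/6: stretching is penalized
```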

Even when Abulnaga improved the energy in his mapping algorithm to better model volume physics, the method didn’t produce useful matchings. His team realized one reason for this failure is that many physical energies — and most mapping algorithms — lack symmetry.

In this setting, a symmetric method doesn’t care in which order the shapes are given as input; there is no distinction between a “source” and a “target” for the map. For example, mapping a horse onto a giraffe should produce the same correspondences as mapping a giraffe onto a horse. But for many mapping algorithms, choosing the wrong shape as the source or target leads to worse results, and this effect is even more pronounced in the volumetric case.
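One way to make the symmetry requirement precise is shown below, in illustrative notation that is not taken from the paper. Let f map shape A onto shape B, let Df be its Jacobian (assumed to have positive determinant, so no element is inverted), and let W be a per-point distortion density. A one-directional energy integrates W over the source only. A change of variables shows that measuring the inverse map on B gives a different integral over A:

$$
E_{A\to B}(f) = \int_A W\big(Df(x)\big)\,dx,
\qquad
E_{B\to A}\big(f^{-1}\big) = \int_B W\big(Df^{-1}(y)\big)\,dy = \int_A W\big(Df(x)^{-1}\big)\,\det Df(x)\,dx .
$$

These two quantities generally differ, so the result depends on which shape is called the source. Adding them gives an objective that is unchanged when A and B (and f and its inverse) trade places:

$$
E_{\mathrm{sym}}(f) = E_{A\to B}(f) + E_{B\to A}\big(f^{-1}\big) = \int_A \Big[\, W\big(Df(x)\big) + \det Df(x)\, W\big(Df(x)^{-1}\big) \Big]\,dx .
$$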

Abulnaga documented that most mapping algorithms don’t use symmetric energies.

“If you choose the right energy for your algorithm, it can give you maps that are more realizable,” Abulnaga explains.

The typical energies used in shape alignment are only designed to map in one direction. If a researcher tries to apply them bidirectionally to create a symmetric map, the energies no longer behave as expected. These energies also behave differently when applied to surfaces and volumes.
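To see why direction matters, take a single tetrahedron and a one-directional energy such as the Dirichlet energy W(J) = ‖J‖²_F, integrated over the domain of the map. Measuring the same correspondence forward (on shape A) and backward (on shape B, where volumes are scaled by det J and the Jacobian is J⁻¹) gives two different numbers. The sketch assumes NumPy. `one_way_energy` is a hypothetical helper, and the Dirichlet energy is a stand-in chosen for simplicity, not necessarily one the paper analyzes.

```python
import numpy as np

def one_way_energy(J, domain_volume):
    """Dirichlet energy of an affine map, integrated over its domain tetrahedron."""
    return domain_volume * np.sum(J * J)   # np.sum(J * J) is the squared Frobenius norm

J = np.diag([2.0, 1.0, 1.0])        # stretch the source tetrahedron 2x along x
vol_A = 1.0 / 6.0                   # rest volume of the tetrahedron on shape A
vol_B = vol_A * np.linalg.det(J)    # its image on shape B is twice as large

forward = one_way_energy(J, vol_A)                   # measure the map A -> B on A
backward = one_way_energy(np.linalg.inv(J), vol_B)   # measure the inverse B -> A on B
print(forward, backward)  # about 1.0 and 0.75: one correspondence, two different scores
```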

Based on these findings, Abulnaga and his collaborators created a mathematical framework that researchers can use to see how different energies will behave and to determine which they should choose to create a symmetric map between two objects. Using this framework, they built a mapping algorithm that combines the energy functions for two objects in a way that guarantees symmetry throughout.
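The article does not give the authors' formula. One simple way to combine energies so that symmetry holds by construction is to add the energy of a map, measured on the first shape, to the energy of its inverse, measured on the second. The sketch below does this for a single tetrahedron. It assumes NumPy, uses a Dirichlet density ‖J‖²_F as a stand-in, and uses hypothetical helper names. It then checks that swapping which shape plays the source leaves the value unchanged.

```python
import numpy as np

def one_way_energy(J, domain_volume):
    """Dirichlet energy ||J||_F^2 of an affine map, integrated over its domain tetrahedron."""
    return domain_volume * np.sum(J * J)

def symmetric_energy(J, domain_volume):
    """Sum of the one-way energies of a map and of its inverse.

    The inverse map lives on the image tetrahedron, whose volume is
    domain_volume * det(J); det(J) > 0 is assumed (no inverted elements).
    """
    image_volume = domain_volume * np.linalg.det(J)
    return one_way_energy(J, domain_volume) + one_way_energy(np.linalg.inv(J), image_volume)

# A generic orientation-preserving affine map of one tetrahedron (det(J) = 1.284 > 0).
J = np.array([[1.5, 0.3, 0.0],
              [0.1, 0.8, 0.2],
              [0.0, 0.4, 1.2]])
vol_A = 1.0 / 6.0
vol_B = vol_A * np.linalg.det(J)

a_to_b = symmetric_energy(J, vol_A)                  # treat A as the "source"
b_to_a = symmetric_energy(np.linalg.inv(J), vol_B)   # treat B as the "source"
print(np.isclose(a_to_b, b_to_a))  # True: the input order no longer matters
```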

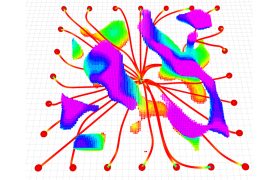

A user feeds the algorithm two shapes represented as tetrahedral meshes. The algorithm then computes two maps, one in each direction: from the first shape to the second, and from the second back to the first. These maps show where each corner of each tetrahedron should move to match the shapes.
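In a mesh setting, a map of this kind can be stored as a new position for every vertex. The per-tetrahedron Jacobians and a total energy follow from those positions. The sketch below shows a hypothetical data layout, not the authors' implementation. It assumes NumPy, uses a Dirichlet energy as a stand-in for whatever energy the method actually uses, and uses made-up function names. The backward map would be handled the same way, with shape B's vertices and tetrahedra as the domain.

```python
import numpy as np

def per_tet_jacobians(vertices, tets, mapped):
    """Deformation gradient of every tetrahedron under a per-vertex map.

    vertices: (n, 3) rest positions; tets: (m, 4) corner indices;
    mapped: (n, 3) positions each vertex is sent to by the map.
    """
    def edges(points):
        p = points[tets]                                      # (m, 4, 3) corner coordinates
        return np.transpose(p[:, 1:] - p[:, :1], (0, 2, 1))  # (m, 3, 3), edges as columns
    return edges(mapped) @ np.linalg.inv(edges(vertices))    # batched J = E_mapped @ E_rest^-1

def rest_volumes(vertices, tets):
    """Unsigned volume of every tetrahedron in the rest mesh."""
    p = vertices[tets]
    e = p[:, 1:] - p[:, :1]                                   # (m, 3, 3), edges as rows
    return np.abs(np.linalg.det(e)) / 6.0

def dirichlet_energy(vertices, tets, mapped):
    """Volume-weighted sum of ||J||_F^2 over all tetrahedra."""
    J = per_tet_jacobians(vertices, tets, mapped)
    return float(np.sum(rest_volumes(vertices, tets) * np.sum(J * J, axis=(1, 2))))

# Two tetrahedra sharing the face (1, 2, 3).
vertices = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 1]], dtype=float)
tets = np.array([[0, 1, 2, 3], [4, 1, 2, 3]])
forward = vertices * np.array([2.0, 1.0, 1.0])   # where each corner of shape A should move

print(dirichlet_energy(vertices, tets, forward))   # about 3.0
print(dirichlet_energy(vertices, tets, vertices))  # about 1.5 for the identity map
```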

“The energy is the cornerstone of this mapping process. The model tries to align the two shapes, and the energies prevent it from making unexpected alignments,” he says.

Achieving accurate alignments

When the researchers tested their approach, it produced maps that aligned shape pairs more closely, were of higher quality, and had less distortion than those of other approaches that work on volumes. They also showed that using volume information can yield more accurate maps even when one is concerned only with the map of the outer surface.

However, there were some cases where their method fell short. For instance, the algorithm struggles when the alignment requires a large change in volume, such as mapping a shape with a filled interior to one with a cavity inside.

In addition to addressing that limitation, the researchers want to continue optimizing the algorithm to reduce its running time. They are also working on extending the method to medical applications, bringing in MRI signals in addition to shape, which could help bridge the mapping approaches used in medical computer vision and computer graphics.

“A theoretical analysis of symmetry drives the development of this algorithm and shows that symmetric shape comparison methods tend to have better performance in comparing and aligning objects,” says Joel Hass, distinguished professor in the Department of Mathematics at the University of California at Davis, who was not involved with this work. “Alignments based exclusively on surface data can lead to collapsed volumes, as occasionally happened to Wile E. Coyote in the ‘Road Runner’ cartoons. A range of experiments shows that the new algorithm has remarkable success in maintaining interior consistency while aligning a pair of 3D objects. It gives a good correspondence throughout the interior as well as on the boundary.”

This research is funded, in part, by the National Institutes of Health, Wistron Corporation, the U.S. Army Research Office, the Air Force Office of Scientific Research, the National Science Foundation, the CSAIL Systems that Learn Program, the MIT-IBM Watson AI Lab, the Toyota-CSAIL Joint Research Center, Adobe Systems, the Swiss National Science Foundation, the Natural Sciences and Engineering Research Council of Canada, and a MathWorks Fellowship.