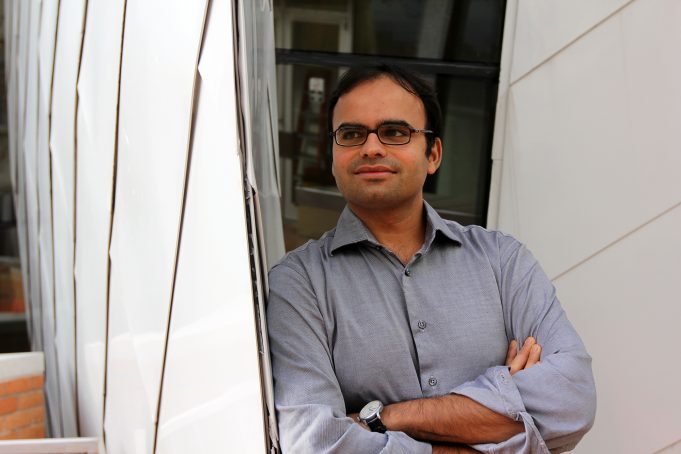

3 Questions: Devavrat Shah on curbing online misinformation

The specter of “fake news” looms over many facets of modern society. Waves of online misinformation have rocked societal events from the Covid-19 pandemic to U.S. elections. But it doesn’t have to be that way, according to Devavrat Shah, a professor in the Department of Electrical Engineering and Computer Science and the Institute for Data, Systems and Society. Shah researches the recommendation algorithms that generate social media newsfeeds. He has proposed a new approach that could limit the spread of misinformation by emphasizing content generated by a user’s own contacts, rather than whatever happens to be trending globally. As Congress and a new presidential administration mull whether and how to regulate social media, Shah shared his thoughts with MIT News.

Q: How does misinformation spread online, and do social media algorithms accelerate that spread?

A: Misinformation spreads when a lie is repeated. This goes back thousands of years. I was reminded last night as I was reading bedtime stories to my 6-year-old, from the Panchatantra fables:

A brahmin once performed sacred ceremonies for a rich merchant and got a goat in return. He was on his way back carrying the goat on his shoulders when three crooks saw him and decided to trick him into giving the goat to them. One after the other, the three crooks crossed the brahmin’s path and asked him the same question – “O Brahmin, why do you carry a dog on your back?”

The foolish Brahmin thought that he must indeed be carrying a dog if three people have told him so. Without even bothering to look at the animal, he let the goat go.

In some sense, that’s the standard form of radicalization: You just keep hearing something, without question and without alternate viewpoints. Then misinformation becomes the information. That is the primary way information spreads in an incorrect manner. And that’s the problem with the recommendation algorithms, such as those likely to be used by Facebook and Twitter. They often prioritize content that’s gotten a lot of clicks and likes, whether or not it’s true, and mix it with content from sources that you trust. These algorithms are fundamentally designed to concentrate their attention onto a few viral posts rather than diversify things. So, they are unfortunately facilitating the process of misinformation.

Q: Can this be fixed with better algorithms? Or are more human content moderators necessary?

A: This is doable through algorithms. The problem with human content moderation is that a human or tech company is coming in and dictating what’s right and what’s wrong. And that’s a very reductionist approach. I think Facebook and Twitter can solve this problem without being reductionist or having a heavy-handed approach in deciding what’s right or wrong. Instead, they can avoid this polarization and simply let the networks operate the way the world operates naturally offline, through peer interactions. Online social networks have twisted the flow of information and put the ability to do so in the hands of a few. So, let’s go back to normalcy.

There’s a simple tweak that could make an impact: A measured amount of diversity should be included in the newsfeeds by all these algorithms. Why? Well, think of a time before social media, when we might chat with people in an office or learn news through friends. Although we are still exposed to misinformation, we know who told us that information, and we tend to share it only if we trust that person. So, unless that misinformation comes from many trusted sources, it is rarely widely shared.

There are two key differences online. First, the content that platforms insert is mixed in with content from sources that we trust, making it more likely for us to take that information at face value. Second, misinformation can be easily shared online so that we see it many times and become convinced it is true. Diversity helps to dilute misinformation by exposing us to alternate points of view without abusing our trust.

Q: How would this work with social media?

A: To do this, the platforms could randomly subsample posts in a way that looks like reality. It’s important that a platform is allowed to algorithmically filter newsfeeds — otherwise there will be too much content to consume. But rather than rely on recommended or promoted content, a feed could pull most of its content, totally at random, from all of my connections on the network. So, content polarization through repeated recommendation wouldn’t happen. And all of this can — and should — be regulated.
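To make the idea of random subsampling concrete, here is a minimal sketch in Python. It is an illustration only, not Shah's method or any platform's actual algorithm. The function name `sample_feed`, the `posts_by_contact` mapping, and the `feed_size` parameter are all hypothetical, and the sketch simplifies by drawing the entire feed from a user's contacts, whereas the interview describes pulling most of it that way.

```python
import random


def sample_feed(posts_by_contact, feed_size, seed=None):
    """Return up to `feed_size` posts drawn uniformly at random,
    without replacement, from everything a user's contacts posted.

    `posts_by_contact` is a hypothetical mapping of contact name to
    a list of that contact's posts. Popularity (clicks, likes) is
    deliberately ignored: every post has the same chance of selection.
    """
    rng = random.Random(seed)
    # Flatten to (contact, post) pairs so the reader still knows who said what.
    all_posts = [
        (contact, post)
        for contact, posts in posts_by_contact.items()
        for post in posts
    ]
    # Never ask for more posts than exist.
    k = min(feed_size, len(all_posts))
    return rng.sample(all_posts, k)


# Hypothetical example data.
posts = {
    "alice": ["a1", "a2", "a3"],
    "bob": ["b1"],
    "carol": ["c1", "c2"],
}
feed = sample_feed(posts, feed_size=4, seed=0)
```

Because selection ignores engagement counts, a post that has gone viral is no more likely to appear than any other post from the same set of contacts, which is the property the answer above relies on. Note that sampling uniformly over posts still favors contacts who post more often; sampling a contact first and then one of their posts would be an alternative design.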

One way to make progress toward more natural behavior is by filtering according to a social contract between users and platforms, an idea legal scholars are already working on. As we discussed, the newsfeed of users impacts their behaviors, such as their voting or shopping preferences. In a recent work, we showed that we can use methods from statistics and machine learning to verify whether or not the filtered newsfeed respects the social contract in terms of how it affects user behaviors. As we argue in this work, it turns out that such contracting may not impact the “bottom line” revenue of the platform itself. That is, the platform does not necessarily need to choose between honoring the social contract and generating revenue.

In a sense, other utilities like the telephone service providers are already obeying this kind of contractual arrangement with the “no spam call list” and by respecting whether your phone number is listed publicly or not. By distributing information, social media is also providing a public utility in a sense, and should be regulated as such.