How quickly do algorithms improve?

MIT scientists show how fast algorithms are improving across a broad range of examples, demonstrating their critical importance in advancing computing.

Algorithms are sort of like a parent to a computer. They tell the computer how to make sense of information so they can, in turn, make something useful out of it.

The more efficient the algorithm, the less work the computer has to do. For all of the technological progress in computing hardware, and the much debated lifespan of Moore’s Law, computer performance is only one side of the picture.

Behind the scenes a second trend is happening: Algorithms are being improved, so in turn less computing power is needed. While algorithmic efficiency may have less of a spotlight, you’d definitely notice if your trusty search engine suddenly became one-tenth as fast, or if moving through big datasets felt like wading through sludge.

This led scientists from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) to ask: How quickly do algorithms improve?

Existing data on this question were largely anecdotal, consisting of case studies of particular algorithms that were assumed to be representative of the broader scope. Faced with this dearth of evidence, the team set off to crunch data from 57 textbooks and more than 1,110 research papers, to trace the history of when algorithms got better. Some of the research papers directly reported how good new algorithms were, and others needed to be reconstructed by the authors using “pseudocode,” shorthand versions of the algorithm that describe the basic details.

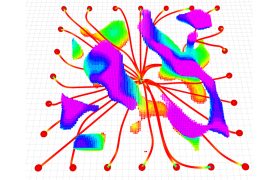

In total, the team looked at 113 “algorithm families,” sets of algorithms solving the same problem that had been highlighted as most important by computer science textbooks. For each of the 113, the team reconstructed its history, tracking each time a new algorithm was proposed for the problem and making special note of those that were more efficient. Ranging in performance and separated by decades, starting from the 1940s to now, the team found an average of eight algorithms per family, of which a couple improved its efficiency. To share this assembled database of knowledge, the team also created Algorithm-Wiki.org.

The scientists charted how quickly these families had improved, focusing on the most-analyzed feature of the algorithms — how fast they could guarantee to solve the problem (in computer speak: “worst-case time complexity”). What emerged was enormous variability, but also important insights on how transformative algorithmic improvement has been for computer science.

For large computing problems, 43 percent of algorithm families had year-on-year improvements that were equal to or larger than the much-touted gains from Moore’s Law. In 14 percent of problems, the improvement to performance from algorithms vastly outpaced those that have come from improved hardware. The gains from algorithm improvement were particularly large for big-data problems, so the importance of those advancements has grown in recent decades.

The single biggest change that the authors observed came when an algorithm family transitioned from exponential to polynomial complexity. The amount of effort it takes to solve an exponential problem is like a person trying to guess a combination on a lock. If you only have a single 10-digit dial, the task is easy. With four dials like a bicycle lock, it’s hard enough that no one steals your bike, but still conceivable that you could try every combination. With 50, it’s almost impossible — it would take too many steps. Problems that have exponential complexity are like that for computers: As they get bigger they quickly outpace the ability of the computer to handle them. Finding a polynomial algorithm often solves that, making it possible to tackle problems in a way that no amount of hardware improvement can.

As rumblings of Moore’s Law coming to an end rapidly permeate global conversations, the researchers say that computing users will increasingly need to turn to areas like algorithms for performance improvements. The team says the findings confirm that historically, the gains from algorithms have been enormous, so the potential is there. But if gains come from algorithms instead of hardware, they’ll look different. Hardware improvement from Moore’s Law happens smoothly over time, and for algorithms the gains come in steps that are usually large but infrequent.

“This is the first paper to show how fast algorithms are improving across a broad range of examples,” says Neil Thompson, an MIT research scientist at CSAIL and the Sloan School of Management and senior author on the new paper. “Through our analysis, we were able to say how many more tasks could be done using the same amount of computing power after an algorithm improved. As problems increase to billions or trillions of data points, algorithmic improvement becomes substantially more important than hardware improvement. In an era where the environmental footprint of computing is increasingly worrisome, this is a way to improve businesses and other organizations without the downside.”

Thompson wrote the paper alongside MIT visiting student Yash Sherry. The paper is published in the Proceedings of the IEEE. The work was funded by the Tides foundation and the MIT Initiative on the Digital Economy.